Technological Assembly Spaces: DVMs

The Expanse | Part 13

Before we really get down and dirty, I realise that by the thirteenth installment of an article series entitled “The Expanse”, having set sail long ago and in fear of losing our position, we may require a celestial map of sorts; a form of coherent and logical sequencing to set our bearings and enable safe passage through what can be at the best of times murky, sometimes unpredictable, forever bewildering territory. Whilst there is still a fair way to go, please find such a map at the end of this article.

Now the logistics are squared away, here are the rest of the artificial “objects” in question:

Three-Dimensional Integrated Circuits (3D ICs)

Distributed Virtual Machines (DVMs)

Blockchain

Artificial Intelligence

In the previous article I discussed the value of “vertical integration” within object assembly through the artificial focal point of 3D ICs: a physically dense substrate that gives a body to computation. By looking at vertically integrated object assembly across different types of spaces I am circling around the idea that this is one element of the part-coherent, part-chaotic whole¹ that has enabled natural systems and objects to eventually become:

In case you have just joined this series, the ultimate objective in our sights is the seemingly paradoxical misnomer “artificial life”. Admittedly, as origin of life researcher Radu Popa says, “We may never agree on a definition of life, which will remain forever subject to a personal perspective”⁵ but by the end, come rain or shine, hail, sleet or snow, this label will hopefully appear neither a paradox nor a misnomer.

The focus of this article will be DVMs. In each case I’ll attempt to disassemble the technological, material, philosophical, ontological and sometimes meta-physical “stack”, then work my way towards answering what new causal influences the object confers both internally and onto the local and global environment.

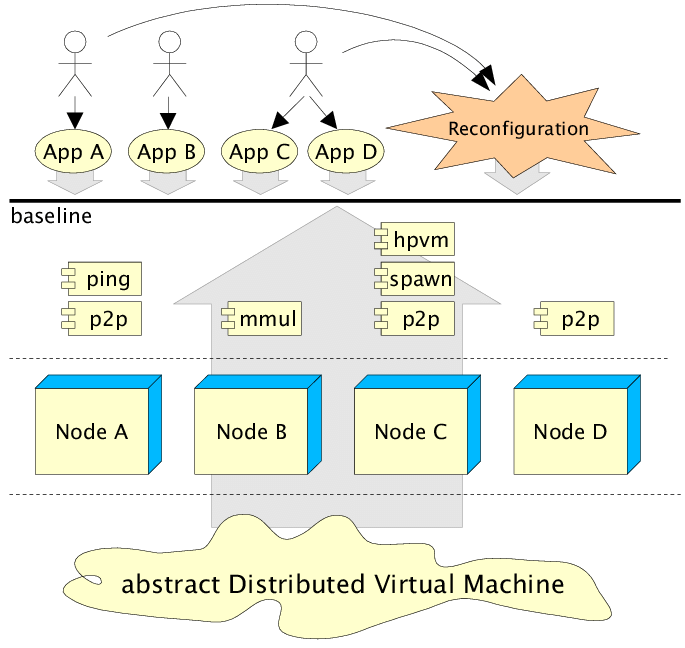

“Model of a Harness Distributed Virtual Machine (DVM).” (Source: Migliardi et al, 2002, On the viability of component frameworks for high performance distributed computing: a case study)

Trying to define DVMs as a specific “technology” alone may be inadequate. The “distribution” element comes from a move away from traditionally monolithic computing architecture (using just one computer to carry out a task) and towards polylithic architecture, which shares the load between multiple operating systems and central processing units (CPUs) by using a large group of computers instead. When one computer crashes, the others can keep the work going.⁶ However, it turns out that the one-machine system presented to the user is actually a manufactured “illusion”. In reality there is a collection of cybernetic constraints running across many actual computers, physical and virtual. Application programming interfaces (APIs) are used as virtual doorways enabling software and other services to invoke what they need within the DVM network.⁷ APIs become the interface between the illusion of one machine and the messy reality of many machines. The “virtualisation” element then becomes clearer: a virtual abstraction of various integrated hardware resources and protocols enabling multiple execution stacks to run on what, for all intents and purposes, is one computational architecture.⁸ Hence, distributed virtual machine.
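To make the “one machine, many machines” illusion a little more tangible, here is a minimal sketch in Python. Everything in it is hypothetical and in-memory: the `Node`, `VirtualMachine` and `submit` names are my own illustrations rather than any real DVM's API. It only shows the shape of the idea, where a caller talks to a single interface and a crashed machine is quietly routed around.

```python
class NodeDown(Exception):
    """Raised when a (simulated) machine cannot accept work."""


class Node:
    """One real machine behind the facade (hypothetical, in-memory only)."""

    def __init__(self, name):
        self.name = name
        self.alive = True

    def run(self, func, *args):
        if not self.alive:
            raise NodeDown(self.name)
        return func(*args)


class VirtualMachine:
    """The 'one machine' a caller sees; internally it is many nodes."""

    def __init__(self, nodes):
        self.nodes = list(nodes)
        self._next = 0  # round-robin cursor

    def submit(self, func, *args):
        # Try each node at most once, starting from the round-robin cursor.
        for offset in range(len(self.nodes)):
            node = self.nodes[(self._next + offset) % len(self.nodes)]
            try:
                result = node.run(func, *args)
            except NodeDown:
                continue  # failover: quietly move on to the next machine
            self._next = (self._next + offset + 1) % len(self.nodes)
            return result, node.name
        raise RuntimeError("no live nodes left in the virtual machine")


def square(x):
    return x * x


vm = VirtualMachine([Node("a"), Node("b"), Node("c")])
first = vm.submit(square, 3)    # served by node "a"
vm.nodes[1].alive = False       # node "b" crashes
second = vm.submit(square, 4)   # skips "b", served by node "c"
```

The caller only ever sees `vm.submit`; which machine did the work, and which one failed along the way, stays below the surface. A real system would add scheduling, health checks and networking, none of which is modelled here.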

Setting our parameters, let’s distinguish between two key types of DVM:

Classic DVM (a single-system-image style), which optimises for efficiency and hides the distributed nature of resources by presenting only one unified compute resource.

An example is Parallel Virtual Machine (PVM), a classic DVM system that lets a dynamic set of (often heterogeneous) networked computers be configured and used as one “virtual machine” for parallel work.

Blockchain DVM⁹ (a decentralised execution environment), in which many nodes run the same computation to maintain a shared state, repeating the work so that the result can be verified within that specific blockchain ecosystem.
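The blockchain-style idea of “repeat the work so you can verify it” can be sketched in a few lines of Python. This is a toy under stated assumptions, not how Bitcoin or Ethereum actually execute transactions: the transfer rule, the `apply_tx`, `replay` and `state_digest` helpers and the account names are all invented for illustration. The only point is that a deterministic transition function lets independent nodes compare a short digest instead of trusting one another.

```python
import hashlib
import json


def apply_tx(state, tx):
    """Deterministic transition: move `amount` from sender to receiver."""
    sender, receiver, amount = tx
    if state.get(sender, 0) < amount:
        return state  # invalid transfer: state is left unchanged
    new_state = dict(state)
    new_state[sender] = new_state[sender] - amount
    new_state[receiver] = new_state.get(receiver, 0) + amount
    return new_state


def state_digest(state):
    """Hash a canonical (sorted-key) encoding so equal states hash equally."""
    encoded = json.dumps(state, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def replay(genesis, transactions):
    """Re-run every transaction from the starting state, in order."""
    state = dict(genesis)
    for tx in transactions:
        state = apply_tx(state, tx)
    return state


genesis = {"alice": 10, "bob": 0}
txs = [("alice", "bob", 4), ("bob", "alice", 1), ("bob", "carol", 99)]

# Three independent nodes repeat the same work from the same inputs.
digests = [state_digest(replay(genesis, txs)) for _ in range(3)]
honest_agree = len(set(digests)) == 1

# A node that skips transactions ends up with a different digest.
faulty = state_digest(replay(genesis, txs[:1]))
faulty_detected = faulty != digests[0]
```

The redundancy is wasteful in the efficiency sense, since every node does identical work, but that waste is exactly what makes disagreement visible.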

Regarding blockchain production (which will be the central focus of the next article), DVMs operate at the overlapping level of hardware and infrastructure by acting as multi-scaled operating systems, whilst simultaneously acting as a medium through which distribution of these operations can take place.¹⁰ In biology, redundancy is expensive but stabilising.¹¹ In blockchain DVMs, redundancy is expensive but legitimising. Same shape, different substrate. That is why, for example, the Bitcoin or Ethereum ledger is, in theory, stored in its totality across many different servers (or nodes). While the ledger is replicated across many nodes, replication is not completely uniform, as old block data can be “pruned” once validation has taken place. In this sense, the technosphere is not just building redundancy but “selective redundancy”, where cost and utility dictate which “components” persist. Through this process, the transactions and information stored within the Bitcoin or Ethereum blockchain ledger are at once replicated, distributed, decentralised, legitimised and effectively made transparent by the nature of this artificial architecture. DVMs, if you so wish, form both a population of completely artificial machines (objects) and the virtual workload habitat itself, existing under the surface of a physical substrate (objects).

Objects operating within objects.

Which lands us back, as it were, at the “illusory” element. A deliberately engineered mismatch between appearance and substrate produces one system that is made of many systems yet behaves as one. If we look at bioanalogous examples of living systems, such as humans, this phenomenon seems to be one component of what leads to the development of “higher-order” phenomena like cognition, intelligence and agency. To make it less illusory, let’s have a look at the technological assembly chain for DVMs:

(Technical) DVM Assembly Chain

DVM Superstructure

→ global scheduling and resource pooling

→ process migration and failover mechanisms

→ distributed naming and service discovery

→ distributed storage and state replication

→ distributed networking, overlay plus security

Node Substructure (many physical and virtual hosts)

→ host OS plus hypervisor and container runtime

→ agents and daemons (control plane plus telemetry)

→ cluster networking (switches, routers and time synchronisation)

Software substrate

→ kernels, runtimes, libraries and protocols

→ configuration plus policies plus executable code

Information substrate

→ files, packets, logs and state machines

→ bits

Hardware substrate

→ servers (CPU/GPU/NIC), RAM, SSD/HDD, motherboards and PSUs

Device Primitives

→ transistors plus interconnect plus memory cells

Materials

→ silicon, copper, dielectrics, steels/aluminium, polymers and solders

Molecules & Compounds

→ Si, SiO₂, polymers, metal alloys and “doped” semiconductors

Atoms (irreducible)

→ Si, O, C, H, N, Cu, Al, Fe, Ni, Sn, Au, Ag (+ B/P/As dopants; Ta/W/Co in stacks; Li in backup power)

DVMs utilise sub-surface collective functionality to produce outcomes that a single computer could not on its own. If we were to pinch and zoom into one specific term within this assembly chain, one that anyone whose mind has ever been orientated towards the meta-physical realm will immediately recognise, this property becomes even more apparent: daemons.

More infamously (and perhaps mistakenly) known as the precursor to the Christianised concept of “demons”, daimons (which can also be spelt “daemons” but, for posterity’s sake, will from here on be meta-physically and artificially distinguished as daimons) existed, if we rewind back to classical Greece, in the spiritually liminal space between humanity and the gods. They were effectively “agents” who could either support or subvert everyday “rational” experience, more often than not functioning as a translator, messenger or guiding presence. Fitting, then, that these entities were perceived as always up to something, constantly working just behind the veil. Able to interfere, but always just out of physical reach. In fact, computing lore states that this is exactly why the name was chosen for these programs: daemon in this case can be traced back to MIT’s Project MAC in 1963, where programmers, inspired by “Maxwell’s demon” (a 19th-century thought experiment in which James Clerk Maxwell imagined a tiny entity capable of sorting gas molecules, essentially creating order from chaos without expending any energy, and thus seemingly violating the second law of thermodynamics), coined the term to mean a program that unobtrusively operates in the background, outside of direct user control. An unseen process that binds a system together. Rather than a “ghost in the machine” we get a “daemon in the distributed virtual machine” instead.

Perhaps somewhat unsurprisingly then, given what we already know about the influential nature of DVMs, the FFRDC¹² known as Oak Ridge National Laboratory defines a DVM as “a cooperating set of daemons that together supply the services required to run user programs as if they were on a distributed memory parallel computer. These daemons run on (often heterogeneous) distributed groups of computers connected by one or more networks.”¹³ A PVM achieves this by running a daemon on each host computer, where the daemons coordinate inter-host communication and process control. Whilst PVMs do not primarily “virtualise hardware”, they virtualise the entire cluster into a coherent execution habitat, using daemons and message passing to construct a single coherent substrate for whatever agency is trying to inhabit it. Then the technosphere does what it does best: produce a thin veil of illusion. Whereas before the illusion centred around one machine, it has now evolved into one world. In this case, the illusion is useful because it compresses distance; not a physical distance, but a cognitive distance. It orientates a distributed network towards a hierarchy of sub-goals¹⁴ and independent action in order to achieve the same primary goal. From such a stable (steady-state and reproducible) habitat, agents can persist long enough to build and maintain world-models. Coordination that used to require people, process and, in the case of the meta-physical daimon, “prayer”, is refactored into artificial substrate. And whenever coordination becomes substrate, the surrounding ecology rearranges itself around that new law. Daemons form teams of tireless micro-agents that maintain global coherence across a fragmented substrate. Ever more causally significant, higher-order agency is not far from the surface.
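The “one daemon per host” arrangement described above can be sketched as a toy, single-process simulation in Python. To be clear about the assumptions: the `Daemon` class, its `spawn`, `send`, `route` and `deliver` methods and the `host:task` addressing scheme are all hypothetical stand-ins of my own, not PVM's real interface, and no actual networking takes place. The sketch only illustrates the division of labour, where tasks hand messages to their local daemon and the daemons handle process control and inter-host delivery between themselves.

```python
from collections import deque


class Daemon:
    """One background coordinator per host (a toy stand-in, not PVM's daemon)."""

    def __init__(self, host, network):
        self.host = host
        self.network = network      # shared dict: host name -> Daemon
        self.tasks = {}             # task id -> list of received messages
        self.outbox = deque()       # messages waiting to be routed
        network[host] = self

    def spawn(self, task_name):
        """Process control: start a task on this host and return its address."""
        task_id = f"{self.host}:{task_name}"
        self.tasks[task_id] = []
        return task_id

    def send(self, dest_task_id, payload):
        """Tasks hand messages to their local daemon instead of the network."""
        self.outbox.append((dest_task_id, payload))

    def route(self):
        """Inter-host communication: forward each message to the owning daemon."""
        while self.outbox:
            dest_task_id, payload = self.outbox.popleft()
            dest_host = dest_task_id.split(":", 1)[0]
            self.network[dest_host].deliver(dest_task_id, payload)

    def deliver(self, task_id, payload):
        """Place an incoming message in the target task's inbox."""
        self.tasks[task_id].append(payload)


network = {}
d1 = Daemon("host1", network)
d2 = Daemon("host2", network)

worker = d2.spawn("worker")       # a task living on host2
d1.send(worker, {"chunk": [1, 2, 3]})
d1.route()                        # host1's daemon forwards to host2's daemon
inbox = d2.tasks[worker]
```

From the sender's side there is no “host2” to think about, only an address and a local daemon that takes care of the rest, which is the compression of cognitive distance in miniature.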

Taking DVMs as an incubator for agents, it becomes clear that AI agents do not just need “intelligence” but also persistence, access to resources, memory, coordination and, ultimately, somewhere to inhabit. DVMs provide that “somewhere”: the space, and moreover the interconnected habitat, for agency to develop, distribute, operate and action itself in the virtual realm whilst also expanding outwards, further into physical, even biological spaces. If 3D ICs are representative of “vertical integration” within and without the technosphere, then, in my opinion, DVMs simultaneously represent both “density” and “expansion” of agency, stacking, multiplying and interconnecting operating systems in order to not just run programmes but create new environmental niches in the artificial space.

This is why DVMs belong in the ***evolutionary*** story about “artificial life”. These objects within objects introduce homeostasis (e.g. failover and replication), compartmentalisation (e.g. isolation), and a primitive metabolism (e.g. resource accounting) into computational, virtual, and cybernetic spaces. The moment you can pay for operation execution, ration it, prioritise it, and keep it running while its underlying parts die, you have something that begins to resemble a biological assembly chain. Maybe not “alive” in the traditional sense, but certainly living in the operational sense. And here is where the chain of causation from

space → structure → object → complexity → cognitive—intelligent—agential → living

bubbles beyond the surface: self-maintaining patterns persisting through the turnover of material through virtual, through artificial, and out into physical domains. A DVM is a technological object that survives its own components. When coupled with other artificial objects like 3D ICs and blockchain, that is something, when artificial life is in mind, to pay close attention to.
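As a rough illustration of an object that “survives its own components”, the Python sketch below combines two of the properties named earlier: homeostasis (a loop that pushes the replica count back to a setpoint) and a primitive metabolism (a finite budget of credits spent on each restart). The `Cluster` class, its credit scheme and the replica names are hypothetical and purely illustrative; real orchestrators are far richer than this.

```python
class Cluster:
    """Keeps `desired` replicas alive while credits last (toy model)."""

    def __init__(self, desired, credits, cost_per_start=1):
        self.desired = desired
        self.credits = credits          # "metabolism": a finite budget
        self.cost_per_start = cost_per_start
        self.replicas = set()
        self._counter = 0

    def _start_replica(self):
        if self.credits < self.cost_per_start:
            return False                # rationing: nothing left to spend
        self.credits -= self.cost_per_start
        self._counter += 1
        self.replicas.add(f"replica-{self._counter}")
        return True

    def kill(self, name):
        """Simulate a component dying underneath the service."""
        self.replicas.discard(name)

    def reconcile(self):
        """Homeostasis: push the replica count back towards the setpoint."""
        while len(self.replicas) < self.desired:
            if not self._start_replica():
                break
        return len(self.replicas)


cluster = Cluster(desired=3, credits=5)
cluster.reconcile()                       # starts replica-1..3, 2 credits left
original = set(cluster.replicas)

for name in sorted(original):             # every original component dies
    cluster.kill(name)
    cluster.reconcile()

survivors = set(cluster.replicas)
```

By the end of the run none of the original replicas remain, yet the service is still there, carried by replacements. It is also running one replica short, because the budget ran out before the last restart: the pattern persists only for as long as it can pay for itself.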

Mapping “The Expanse”:

Defining the “Techno-Bio Boundary”

What About Spaces?

Defining Agencies in Spaces

Part 7 - Bridging Assembly & Morphology

Imagining Minds & Living Systems in Artificial Spaces

Potential Components of Artificially Living Systems

Coincidentally, “order” and “complexity” (which can largely be reduced to “order” and “chaos”) together form the fundamental equation behind “organisation”: thought to be the quintessential component in origin of life theories.

Drawn from two primary theories:

i) Assembly Theory (AT)—capacity to influence the surrounding environment through generating and becoming a part of “causal hierarchies”.

ii) Integrated Information Theory (IIT)—density of internal interconnection and coherence between parts denoted by the value Phi (Φ).

Cognition—ability to (i) set and orientate towards a goal, and (ii) take independent action to achieve that goal.

Intelligence—ability to achieve the same goal by different means regardless of barriers.

Agency—using (a) cognition and (b) intelligence to (i) construct and act upon world models, (ii) maintain or reduce a variable relative to a setpoint, and (iii) causally influence the external environment (sometimes to an asymmetric degree).

Classic definitions include:

Thermodynamic—“living systems might then be defined as localized regions where there is a continuous maintenance or increase in organization.”

NASA—“Life is a self-sustaining chemical system capable of Darwinian evolution”.

Bio-Chemical—“…living organisms share seven traits: organic nature, high degree of organization, pre-programming, interaction (or collaboration), adaptation, reproduction and evolution, the last two being facultative as they are not present in all living beings.”

Great for constructing big websites and apps (so they don’t crash when millions visit), online games (so the world keeps running even if a server fails), video streaming (so films don’t buffer as much) and even more practical science and weather simulations as well as training big AI models—hold on to that last part, it is important.

DVMs require internal APIs to start/stop services, scale up/down (add more copies), move work away from broken machines, check system health and deploy updates.

U.S. National Institute of Standards and Technology (NIST): definition of virtualization.

Please note that here I am deliberately stretching the term for my own assembly-space argument, which will become even more apparent in the next few articles.

You can have a virtual machine programme downloaded on your hardware, but often the programme cannot access that hardware without a “hypervisor” (depending on the type of DVM being used). The hypervisor connects the virtual machine to a host computer, abstracting information from the hardware and translating it for the DVM software, essentially creating a network of computers within computers.

For example, cellular reproduction requires a lot of energy because the total organism is always moving towards an entropic state, but it is necessary for the longevity and proper function of the total organism.

Federally Funded Research and Development Centres—who according to some are renowned for having some very interesting and exotic research projects on the go…

Like policies, schedulers, controllers, queues, retries, health checks, consensus and more general coordination protocols.